I asked an online tracking company for all of my data and here's what I found

It’s 15:10 on April 18, 2018. I’m in the Privacy International office, reading a news story on the use of facial recognition in Thailand. On April 20, at 21:10, I clicked on a CNN Money Exclusive on my phone. At 11:45 on May 11, 2018, I read a story on USA Today about Facebook knowing when teen users are feeling insecure.

How do I know all of this? Because I asked an advertising company called Quantcast for all of the data they have about me.

Most people will have never heard of Quantcast, but Quantcast will certainly have heard about them. The San Francisco-based company collects real-time insights on audience characteristics across the internet and claims that it can do so on over 100 million websites.

Quantcast is just one of many companies that form part of a complex back-end system used to direct advertising to individuals and specific target audiences.

The (deliberately) blurred screenshot below shows what this looks like for a single person: over the course of a single week, Quantcast has amassed over 5300 rows and more than 46 columns' worth of data, including URLs, time stamps, IP addresses, cookie IDs, browser information and much more.

Seeing that the company has such granular insight into my online habits feels quite unnerving. Yet the websites on which Quantcast has tracked my visits are just a small fraction of what the company knows about me. Quantcast has also predicted my gender, my age, the presence of children in my household (including the number of children and their ages), my education level, and my gross yearly household income in US Dollars and in British Pounds.

Quantcast has also placed me in much more fine-grained categories whose names suggest that the data was obtained from data brokers like Acxiom and Oracle, but also from MasterCard and credit referencing agencies like Experian.

Some of the categories are uncannily specific. My MasterCard UK shopping interests, for instance, include travel and leisure to Canada (I have in fact been to Canada recently for work) and frequent transactions in Bagel Restaurants (I can remember one night out where I purchased quite a few bagels). Experian UK classifies me according to my assumed financial situation (for some inexplicable reason I’m classified as “City Prosperity: World-Class Wealth”); the data broker Acxiom even placed me in a category called “Alcohol at Home Heavy Spenders” (was it because I went shopping for a birthday party at home?); and a company called Affinity Answers thinks I have a social affinity with the consumer profile “Baby Nappies & Wipes” (very, very wrong).

Ads seem trivial, but the sheer scope and granularity of the data that is used to target people ever more precisely is anything but trivial. Looking at these categories reminds me of what the technology critic Sara Watson has called the ‘uncanny valley of personalisation’. It is impossible for me to understand why I am classified and targeted the way I am; it is impossible to reconstruct which data any of these segmentations are based on and - most worryingly - it is impossible for me to know whether this data can be (and is being) used against me.

The murky world of third-party tracking

Quantcast is one of countless so-called “third parties” that monitor people’s behaviour online. Because companies like Quantcast (just like Google and Facebook) have trackers on so many websites and apps, they are able to piece together your activity on several different websites throughout your day.

My Quantcast data, for instance, gives an eerily specific insight into my work life at Privacy International. From my browsing history alone, companies like Quantcast don’t just know that I work on technology, security, and privacy – my news interests reveal what exactly it is that I am working on at any point in time. My Quantcast data even reveals that I have a personal blog on Tumblr.
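The cross-site linking described above can be illustrated with a minimal sketch. It assumes a tracker that receives one record per page view, keyed by a cookie ID; the field names, site names, and the `build_profiles` helper are hypothetical and are not taken from Quantcast's actual data or systems.

```python
from collections import defaultdict

# Hypothetical page-view records as a single third-party tracker might
# receive them from different websites. All values are made up.
events = [
    {"cookie_id": "abc123", "site": "news.example", "time": "2018-04-18T15:10"},
    {"cookie_id": "abc123", "site": "money.example", "time": "2018-04-20T21:10"},
    {"cookie_id": "xyz789", "site": "news.example", "time": "2018-04-19T09:00"},
    {"cookie_id": "abc123", "site": "blog.example", "time": "2018-05-11T11:45"},
]


def build_profiles(events):
    """Group page views by cookie ID, in chronological order."""
    profiles = defaultdict(list)
    # ISO-style timestamps sort correctly as plain strings.
    for event in sorted(events, key=lambda e: e["time"]):
        profiles[event["cookie_id"]].append((event["time"], event["site"]))
    return dict(profiles)


profiles = build_profiles(events)
for cookie_id, visits in profiles.items():
    print(cookie_id, "->", [site for _, site in visits])
```

Because the same cookie ID shows up on unrelated websites, a simple grouping step is enough to turn separate page views into one browsing history per person.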

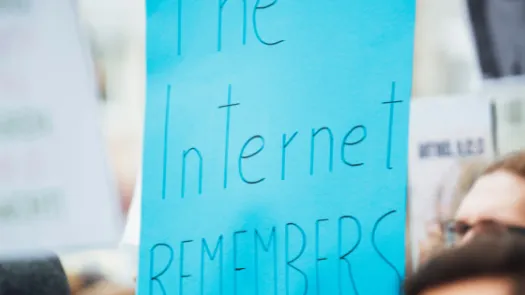

For each and every single one of these links, Quantcast claims that it has obtained my consent to be tracked – but that is only part of the story. Quantcast has no direct relationship with the people whose data it collects. As a result, most people have never heard of the company, do not know that it processes their data and profiles them, and cannot tell whether this data is accurate, for what purposes it is used, with whom it is shared, or what the consequences of this processing are.

Quantcast claims that it has obtained my (and likely your) consent because somewhere, on some website, I must have mindlessly clicked “I ACCEPT”. The reason I did this is that so-called “consent forms” are specifically designed to make you click “I ACCEPT”, simply because it is incredibly tedious and unnecessarily time-consuming to not accept tracking. Privacy International believes that this is in violation of the EU’s General Data Protection Regulation (GDPR), which requires that consent is freely given, unambiguous, and specific. The UK Information Commissioner’s Office argues that “genuine consent should put individuals in charge, build trust and engagement, and enhance your reputation.”

Quantcast sells such a “consent solution” to websites and publishers like news websites. Its design is a perfect example of the ‘dark patterns’ that incentivise people to “agree” to highly invasive privacy practices – a widespread practice that our friends at the Norwegian Consumer Council have outlined in this excellent report.

Only after I clicked on the incredibly small “Show Purposes” and “See full vendor list” in the next window was I able to fully grasp what clicking “I ACCEPT” really entails: namely that I “consent” to hundreds of companies using my data in ways that most people would find surprising. If I had clicked “I ACCEPT” on the window above, I would have agreed to a company called Criteo matching my online data to offline sources and linking the different devices I use.

In fact, Quantcast’s deceptive design is so effective that the company proudly declares that it achieves a 90 percent consent rate on websites that use its framework.

Data brokers and the hidden data ecosystem

The fact that countless companies are tracking millions of people around the web and on their phones is disturbing enough, but what is even more disturbing about my Quantcast data is the extent to which the company relies on data brokers, credit referencing agencies, and even credit card companies in ways that are impossible for the average consumer to know about or escape.

Advertising companies and data brokers have been quietly collecting, analysing, trading, and selling data on people for decades. What has changed is the granularity and invasiveness with which this is possible.

Data brokers buy your personal data from companies you do business with; collect data such as web browsing histories from a range of sources; combine it with other information about you (such as magazine subscriptions, public government records, or purchasing histories); and sell their insights to anyone who wants to know more about you.

Even though these companies are on the whole non-consumer facing and hardly household names, the size of their data operations is astounding. Acxiom’s 2017 annual report, for instance, states that they offer data “on approximately 700 million consumers worldwide, and our data products contain over 5,000 data elements from hundreds of sources.”

Part of the problem is that this data can be used to target, influence, and manipulate each and every one of us ever more precisely. How precisely? A few years ago, an advertising company from Massachusetts in the US targeted “abortion-minded women” with anti-abortion messages while they were in hospital. What is legal in the US is very different from what is legal in the EU, yet the example shows what is technically possible: to target very precise groups of people, at particular times and particular places. This is the reality of what targeted advertising looks like today.

While uncannily accurate data can be used against us, inaccurate data is no less harmful, especially when data that most of us don’t even know exists, and over which we have very little control, is used to make decisions about us. An investigation by Big Brother Watch in the UK, for instance, showed how Durham Police were feeding Experian’s Mosaic marketing data into their ‘Harm Assessment Risk Tool’ to predict whether a suspect might be at low, medium or high risk of reoffending, in order to guide decisions as to whether a suspect should be charged or released onto a rehabilitation program. Durham Police is not the only police force in England and Wales that uses the Mosaic service. Cambridgeshire Constabulary and Lancashire Police are also listed as having contracts with Experian for Mosaic.

How Privacy International is challenging the hidden data industry

If you have been following the Cambridge Analytica and Facebook scandals over the past few months, you might get the impression that privacy scandals are about bad actors misusing well-intended platforms during major elections, with the platforms guilty of responding too slowly. Our interpretation has always been that we are faced with a much more systemic problem that lies at the very core of the current ways in which advertisers, marketers, and many others exploit people’s data.

The European General Data Protection Regulation, which has applied since May 25, 2018, strengthens the rights of individuals with regard to the protection of their data, imposes more stringent obligations on those processing personal data, and provides for stronger regulatory enforcement powers.

That’s why Privacy International has filed complaints against seven companies with data protection authorities in France, Ireland, and the UK: the data brokers Acxiom and Oracle, the ad-tech companies Criteo, Quantcast, and Tapad, and the credit referencing agencies Equifax and Experian.

These companies do not comply with the Data Protection Principles, namely the principles of transparency, fairness, lawfulness, purpose limitation, data minimisation, and accuracy. They also do not have a legal basis for the way they use people's data, in breach of GDPR.

The world is being rebuilt by companies and governments so that they can exploit data. Without urgent and continuous action, data will be used in ways that people cannot now even imagine, to define and manipulate our lives without us being able to understand why or to fight back effectively. We urge the data protection authorities to investigate these companies and to protect individuals from the mass exploitation of their data, and we encourage journalists, academics, consumer organisations, and civil society more broadly to further hold these industries to account.

This piece was written by PI's Data Exploitation Programme Lead Frederike Kaltheuner

![[A screengrab of the Data Subject Access Request I have obtained from Quantcast]](/sites/default/files/styles/middle_column_small/public/2018-11/Screen%20Shot%202018-11-07%20at%2011.39.01.png?itok=QH7kjsTi)

![[A screengrab of the Data Subject Access Request PI obtained from Quantcast]](/sites/default/files/styles/middle_column_small/public/2018-11/Screen%20Shot%202018-11-08%20at%2012.15.42.png?itok=QF1CiJYy)

![[A screengrab of the Data Subject Access Request I have obtained from Quantcast]](/sites/default/files/styles/middle_column_small/public/2018-11/Screen%20Shot%202018-11-07%20at%2011.43.09.png?itok=lA1cBLP_)

![[A screengrab of the Data Subject Access Request I have obtained from Quantcast]](/sites/default/files/styles/middle_column_small/public/2018-11/Picture1.png?itok=CWqrB1tX)

![[A screengrab of the Data Subject Access Request I have obtained from Quantcast]](/sites/default/files/styles/middle_column_small/public/2018-11/Picture2.png?itok=WXrjhuuk)