Search

Content type: Examples

In 2018 a report from the Royal United Services Institute found that UK police were testing automated facial recognition, crime location prediction, and decision-making systems but offering little transparency in evaluating them. An automated facial recognition system trialled by the South Wales Police incorrectly identified 2,279 of 2,470 potential matches. In London, where the Metropolitan Police used facial recognition systems at the Notting Hill Carnival, in 2017 the system was wrong 98% of…

Content type: Long Read

Photo Credit: Max Pixel

The fintech sector, with its data-intensive approach to financial services, faces a looming problem. Scandals such as Cambridge Analytica have raised public awareness of abuses involving the use of personal data from Facebook and other sources. Many of these are the same data sets that the fintech sector uses. With the growth of the fintech industry, and its increase in power and influence, it becomes essential to interrogate this use of data by the…

Content type: Report

The use of biometric technology in political processes, i.e. the use of people’s physical and behavioural characteristics to authenticate claimed identity, has swept across the African region, with 75% of African countries adopting one form or another of biometric technology in their electoral processes. Despite high costs, the adoption of biometrics has not restored the public’s trust in the electoral process, as illustrated by post-election violence and legal challenges to the results of…

Content type: Examples

In January 2018 the Cyberspace Administration of China summoned representatives of Ant Financial Services Group, a subsidiary of Alibaba, to rebuke them for automatically enrolling its 520 million users in its credit-scoring system. The main complaint was that people using Ant's Alipay service were not properly notified that enrolling in the credit-scoring system would also grant Ant the right to share their personal financial data, including information about their income, savings, and…

Content type: Examples

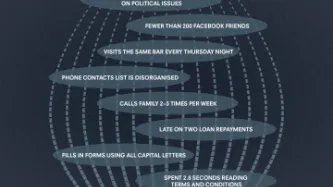

In 2015, a small number of Silicon Valley start-ups began experimenting with assessing prospective borrowers in developing countries such as Kenya by inspecting their smartphones. Doing so, they claimed, enabled them to charge less in interest than more traditional microlenders, since many of their target customers lack traditional credit ratings. The amount of data on phones - GPS coordinates, texts, emails, app data, and more obscure details such as how often the user recharges the battery,…

Content type: Examples

Because banks often decline to give loans to those whose "thin" credit histories make it hard to assess the associated risk, in 2015 some financial technology startups began looking at the possibility of instead performing such assessments by using metadata collected by mobile phones or logged from internet activity. The algorithm under development by Brown University economist Daniel Björkegren for the credit-scoring company Entrepreneurial Finance Lab was built by examining the phone records…

Content type: News & Analysis

Privacy International is celebrating Data Privacy Week, where we’ll be talking about privacy and issues related to control, data protection, surveillance and identity. Join the conversation on Twitter using #dataprivacyweek.

If you were looking for a loan, what kind of information would you be happy with the lender using to make the decision? You might expect data about your earnings, or whether you’ve repaid a loan before. But, in the changing financial sector, we are seeing more and more…